The Importance of DeepSeek


Why is DeepSeek important? OpenAI's o3: The grand finale of AI in 2024 - covering why o3 is so impressive. Much of the content overlaps substantially with the RLHF tag covering all of post-training, but new paradigms are emerging in the AI space. There's a very clear trend here that reasoning is emerging as an important topic on Interconnects (right now logged as the `inference` tag). The end of the "best open LLM" - the emergence of different clear size categories for open models and why scaling doesn't address everyone in the open model audience. In 2025 these will be two different categories of coverage. Two years writing every week on AI. ★ Tülu 3: The next era in open post-training - a reflection on the past two years of aligning language models with open recipes. And while some things can go years without updating, it's important to realize that CRA itself has a number of dependencies which have not been updated, and have suffered from vulnerabilities. AI for the rest of us - the importance of Apple Intelligence (that we still don't have full access to).


Washington needs to control China's access to H20s, and prepare to do the same for future workaround chips. ChatBotArena: The peoples' LLM evaluation, the future of evaluation, the incentives of evaluation, and gpt2chatbot - 2024 in evaluation is the year of ChatBotArena reaching maturity. 2025 will be another very interesting year for open-source AI. I hope 2025 will be similar - I know which hills to climb and will continue doing so. I'll revisit this in 2025 with reasoning models. "There's a technique in AI called distillation, which you're going to hear a lot about, and it's when one model learns from another model, effectively what happens is that the student model asks the parent model a lot of questions, just like a human would learn, but AIs can do this asking millions of questions, and they can essentially mimic the reasoning process they learn from the parent model and they can kind of suck the knowledge of the parent model," Sacks told Fox News.
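The distillation idea in the quote above can be illustrated with a toy sketch. This is not how any particular lab trains models: the teacher here is a hypothetical fixed lookup table of logits (`TEACHER_LOGITS`), the "questions" are just integer ids, and the student is a second table trained by plain gradient descent to match the teacher's output probabilities. All names and numbers are assumptions made for illustration.

```python
import math
import random


def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical teacher: fixed logits over three possible answers per question id.
TEACHER_LOGITS = {
    0: [2.0, 0.5, -1.0],
    1: [-0.5, 1.5, 0.0],
    2: [0.0, -1.0, 2.5],
}


def teacher_answer(question):
    """The teacher's answer distribution for a question."""
    return softmax(TEACHER_LOGITS[question])


def distill(num_queries=3000, learning_rate=0.5, seed=0):
    """Train a student table by repeatedly querying the teacher."""
    rng = random.Random(seed)
    questions = list(TEACHER_LOGITS)
    student = {q: [0.0, 0.0, 0.0] for q in questions}
    for _ in range(num_queries):
        question = rng.choice(questions)      # the student asks a question
        target = teacher_answer(question)     # the teacher responds
        predicted = softmax(student[question])
        # Gradient of cross-entropy(target, softmax(logits)) w.r.t. the logits
        # is (predicted - target); step against it.
        for i in range(len(predicted)):
            student[question][i] -= learning_rate * (predicted[i] - target[i])
    return student


def kl_divergence(p, q):
    """KL(p || q): how far the student's distribution q is from the teacher's p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
```

After training, `kl_divergence(teacher_answer(q), softmax(student[q]))` should be close to zero for every question id, meaning the student reproduces the teacher's answers without ever seeing the teacher's internal logits.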

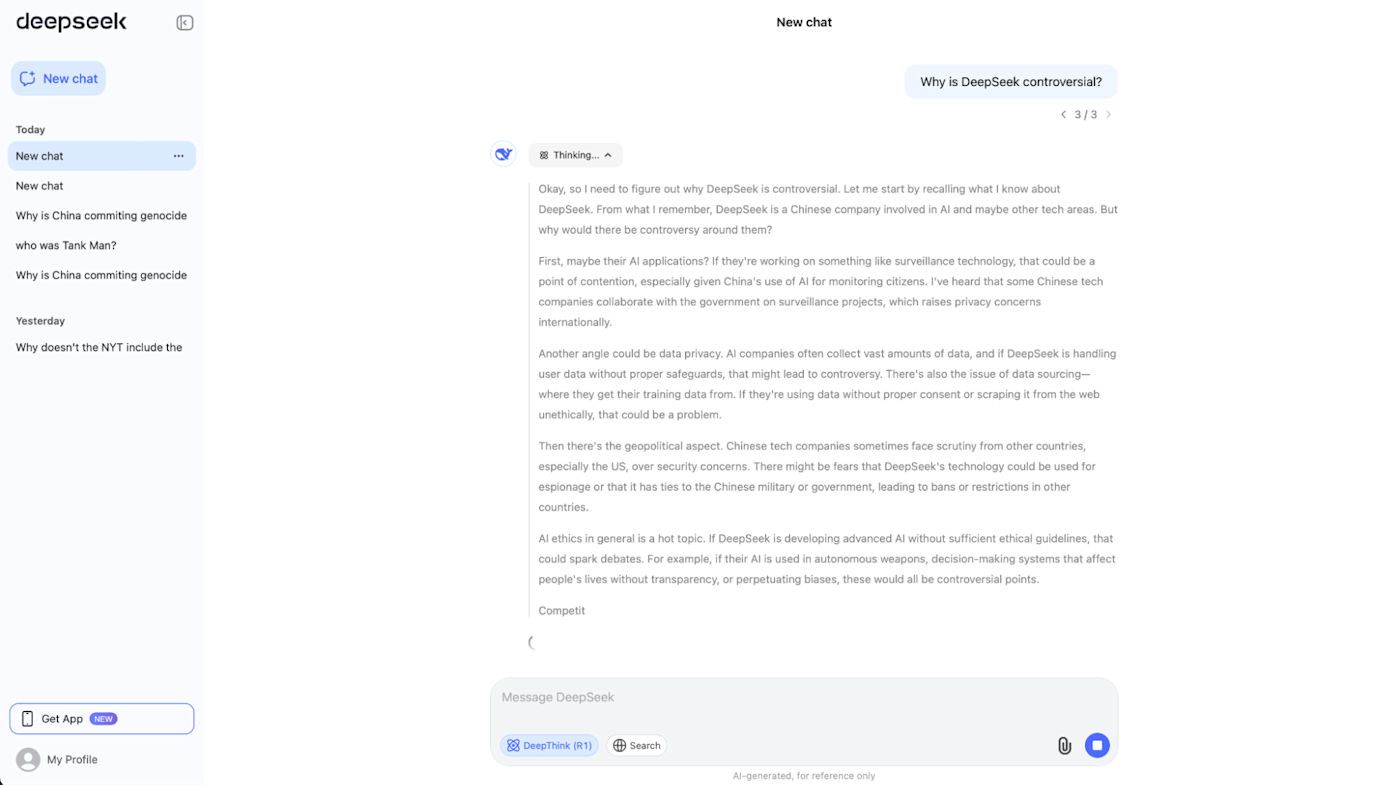

I suspect that what drove its widespread adoption is the way it does visible reasoning to arrive at its answer. "Reinforcement learning is notoriously tricky, and small implementation differences can lead to major performance gaps," says Elie Bakouch, an AI research engineer at Hugging Face. Reinforcement learning is a type of machine learning where an agent learns by interacting with an environment and receiving feedback on its actions. Using a mobile app or computer software, users can type questions or statements to DeepSeek and it will respond with text answers. To find out, we queried four Chinese chatbots on political questions and compared their responses on Hugging Face, an open-source platform where developers can upload models that are subject to less censorship, and on their Chinese platforms, where CAC censorship applies more strictly. ★ The koan of an open-source LLM - a roundup of all the issues facing the idea of "open-source language models" to start 2024. Coming into 2025, most of these still apply and are reflected in the rest of the articles I wrote on the topic.
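The definition of reinforcement learning given above (an agent acting in an environment and learning from feedback) can be made concrete with a minimal sketch. This is a textbook epsilon-greedy multi-armed bandit, not the method DeepSeek or any other lab uses for language models; the class `BanditEnvironment`, the function `run_agent`, and the win probabilities are hypothetical names and values chosen for illustration.

```python
import random


class BanditEnvironment:
    """Toy environment: each action pays a reward of 1 with a fixed probability."""

    def __init__(self, win_probabilities, seed=0):
        self.win_probabilities = win_probabilities
        self.rng = random.Random(seed)

    def step(self, action):
        """Return the feedback (reward) for the chosen action."""
        return 1.0 if self.rng.random() < self.win_probabilities[action] else 0.0


def run_agent(env, num_actions, steps=5000, epsilon=0.1, seed=1):
    """Epsilon-greedy agent: mostly exploit the best-looking action, sometimes explore."""
    rng = random.Random(seed)
    estimates = [0.0] * num_actions  # running average reward per action
    counts = [0] * num_actions       # how often each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(num_actions)  # explore a random action
        else:
            action = max(range(num_actions), key=lambda a: estimates[a])  # exploit
        reward = env.step(action)
        counts[action] += 1
        # Incremental mean update: move the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts
```

Calling `run_agent(BanditEnvironment([0.2, 0.5, 0.8]), num_actions=3)` returns the learned reward estimates and the action counts; the agent is never told the win probabilities and should end up choosing the highest-paying action most of the time. Small choices such as `epsilon` or the update rule change the outcome noticeably, which hints at the sensitivity the Bakouch quote describes.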

Gives you a rough idea of some of their training data distribution. ★ A post-training approach to AI regulation with Model Specs - the most insightful policy idea I had in 2024 was around how to encourage transparency on model behavior. Saving the National AI Research Resource & my AI policy outlook - why public AI infrastructure is a bipartisan issue. In terms of views, writing on open-source strategy and policy is less impactful than the other areas I mentioned, but it has immediate impact and is read by policymakers, as seen by many conversations and the citation of Interconnects in this House AI Task Force Report. Let me read through it again. The likes of Mistral 7B and the first Mixtral were major events in the AI community that were used by many companies and academics to make immediate progress. This year on Interconnects, I published 60 articles, 5 posts in the new Artifacts Log series (next one soon), 10 interviews, transitioned from AI voiceovers to real read-throughs, passed 20K subscribers, expanded to YouTube with its first 1k subs, and earned over 1.2 million page-views on Substack. You can see the weekly views this year below. We see the progress in efficiency - faster generation speed at lower cost.
