Is This DeepSeek Thing Really That Hard?


The inaugural version of DeepSeek laid the groundwork for the company's progressive AI technology. Roubini views technology as a current economic driver, citing quantum computing, automation, robotics, and fintech as "the industries of the future." He suggests these innovations could potentially raise growth to 3% by the end of this decade. From the foundational V1 to the high-performing R1, DeepSeek has consistently delivered models that meet and exceed industry expectations, solidifying its position as a leader in AI technology. As ZDNET's Radhika Rajkumar detailed on Monday, R1's success highlights a sea change in AI that could empower smaller labs and researchers to create competitive models and diversify the field of available options. In the interviews they have given, they seem like smart, curious researchers who simply want to make useful technology. Artificial Intelligence (AI) has emerged as a game-changing technology across industries, and the introduction of DeepSeek AI is making waves in the global AI landscape. • We will continually explore and iterate on the deep thinking capabilities of our models, aiming to enhance their intelligence and problem-solving abilities by expanding their reasoning length and depth.

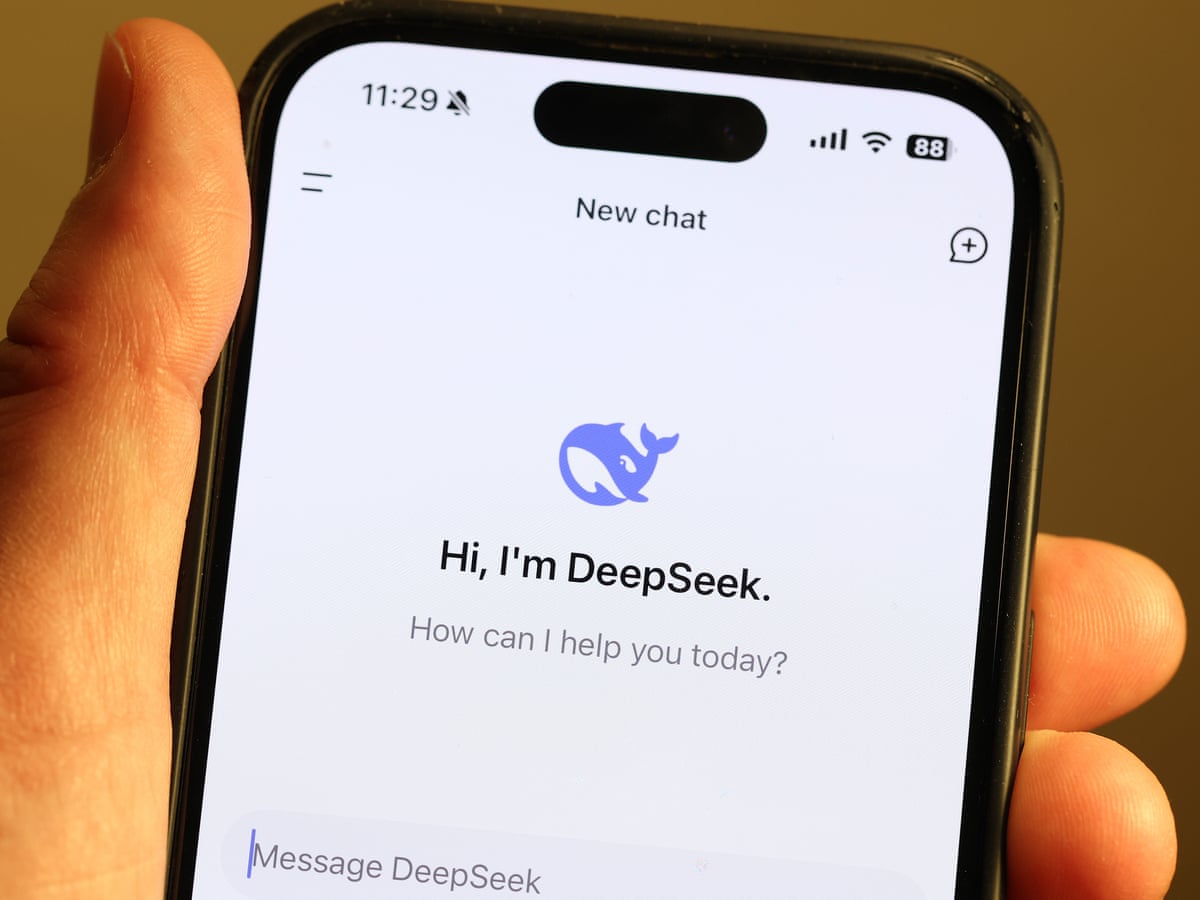

The release of DeepSeek-V3 introduced groundbreaking improvements in instruction-following and coding capabilities. Many people are concerned about the energy demands and associated environmental impact of AI training and inference, and it is heartening to see a development that could lead to more ubiquitous AI capabilities with a much lower footprint. DeepSeek is the name of a free AI-powered chatbot, which looks, feels, and works very much like ChatGPT. The DeepSeek chatbot, known as R1, responds to user queries just like its U.S.-based counterparts. It's not easy to find an app that provides accurate, AI-powered search results for research, news, and general queries. This app provides real-time search results across multiple categories, including technology, science, news, and general queries. 3. Select the official app from the search results (look for the DeepSeek AI logo). Open the DeepSeek app and use it for fast, AI-powered search results. Intuitive Interface: A clean and easy-to-navigate UI ensures users of all skill levels can make the most of the app. Whether you are looking for breaking news, research papers, or trending topics, the app ensures you get the latest and most reliable content.


The DeepSeek iOS app globally disables App Transport Security (ATS), an iOS platform-level protection that prevents sensitive data from being sent over unencrypted channels. Explore the DeepSeek App, a revolutionary AI platform developed by DeepSeek Technologies, headquartered in Hangzhou, China. California-based Nvidia's H800 chips, which were designed to comply with US export controls, were freely exported to China until October 2023, when the administration of then-President Joe Biden added them to its list of restricted items. That was in October 2023, which is over a year ago (a lot of time for AI!), but I think it is worth reflecting on why I thought that and what has changed as well. "DeepSeek represents a new generation of Chinese tech companies that prioritize long-term technological advancement over quick commercialization," says Zhang. Liang, whose low-cost chatbot has vaulted China near the top of the race for AI supremacy, attended a closed-door business symposium hosted by Chinese Premier Li Qiang last month. DeepSeek's AI models were developed amid United States sanctions on China and other countries restricting access to chips used to train LLMs. At the large scale, we train a baseline MoE model comprising approximately 230B total parameters on around 0.9T tokens.
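To make the ATS point concrete: on iOS, ATS is typically switched off app-wide by setting `NSAllowsArbitraryLoads` to true under the `NSAppTransportSecurity` dictionary in the app's `Info.plist`. The sketch below is a minimal illustration, not an audit of the actual DeepSeek app. The helper name `ats_globally_disabled` and the sample plist are hypothetical and exist only for this example.

```python
import plistlib


def ats_globally_disabled(info_plist_bytes: bytes) -> bool:
    """Return True if the plist sets NSAllowsArbitraryLoads to true.

    Hypothetical helper for illustration: it only inspects the global
    switch and ignores per-domain exceptions.
    """
    info = plistlib.loads(info_plist_bytes)
    ats = info.get("NSAppTransportSecurity", {})
    return bool(ats.get("NSAllowsArbitraryLoads", False))


# Hypothetical Info.plist fragment (not taken from any real app).
SAMPLE_PLIST = b"""<?xml version="1.0" encoding="UTF-8"?>
<plist version="1.0">
<dict>
    <key>NSAppTransportSecurity</key>
    <dict>
        <key>NSAllowsArbitraryLoads</key>
        <true/>
    </dict>
</dict>
</plist>
"""

if __name__ == "__main__":
    print(ats_globally_disabled(SAMPLE_PLIST))
```

A plist without the `NSAppTransportSecurity` key leaves ATS at its default (enabled), which is why the helper treats a missing key as "not disabled".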

