Seven More Reasons To Be Enthusiastic About DeepSeek

DeepSeek (Chinese: 深度求索; pinyin: Shēndù Qiúsuǒ) is a Chinese artificial intelligence company that develops open-source large language models (LLMs). Sam Altman, CEO of OpenAI, said last year that the AI industry would need trillions of dollars in investment to support the development of the in-demand chips needed to power the electricity-hungry data centers that run the sector's complex models. The research shows the power of bootstrapping models through synthetic data and getting them to create their own training data. AI is a power-hungry and cost-intensive technology, so much so that America's most powerful tech leaders are buying up nuclear power companies to provide the necessary electricity for their AI models. DeepSeek may show that cutting off access to a key technology doesn't necessarily mean the United States will win. Then these AI systems are going to be able to arbitrarily access these representations and bring them to life.

Start Now. Free access to DeepSeek-V3. Synthesize 200K non-reasoning data (writing, factual QA, self-cognition, translation) using DeepSeek-V3. Obviously, given the current legal controversy surrounding TikTok, there are concerns that any data it captures might fall into the hands of the Chinese state. That's even more surprising considering that the United States has worked for years to restrict the supply of high-power AI chips to China, citing national security concerns. Nvidia (NVDA), the leading supplier of AI chips, whose stock more than doubled in each of the past two years, fell 12% in premarket trading. They had made no attempt to disguise its artifice - it had no defined features besides two white dots where human eyes would go. Some examples of human information processing: when the authors analyze cases where people need to process information very quickly, they get numbers like 10 bit/s (typing) and 11.8 bit/s (competitive Rubik's Cube solvers); when people need to memorize large amounts of information in timed competitions, they get numbers like 5 bit/s (memorization challenges) and 18 bit/s (card deck). China's A.I. regulations, such as requiring consumer-facing technology to comply with the government's controls on information.

Why this matters - where e/acc and true accelerationism differ: e/accs think humans have a bright future and are principal agents in it - and anything that stands in the way of humans using technology is bad. Liang has become the Sam Altman of China - an evangelist for AI technology and investment in new research. The company, founded in late 2023 by Chinese hedge fund manager Liang Wenfeng, is one of scores of startups that have popped up in recent years seeking big investment to ride the massive AI wave that has taken the tech industry to new heights. Nobody is really disputing it, but the market freak-out hinges on the truthfulness of a single and relatively unknown company. "What we understand as a market-based economy is the chaotic adolescence of a future AI superintelligence," writes the author of the analysis. Here's a nice analysis of 'accelerationism' - what it is, where its roots come from, and what it means. And it is open source, which means other companies can test and build upon the model to improve it. DeepSeek subsequently released DeepSeek-R1 and DeepSeek-R1-Zero in January 2025. The R1 model, unlike its o1 rival, is open source, which means that any developer can use it.

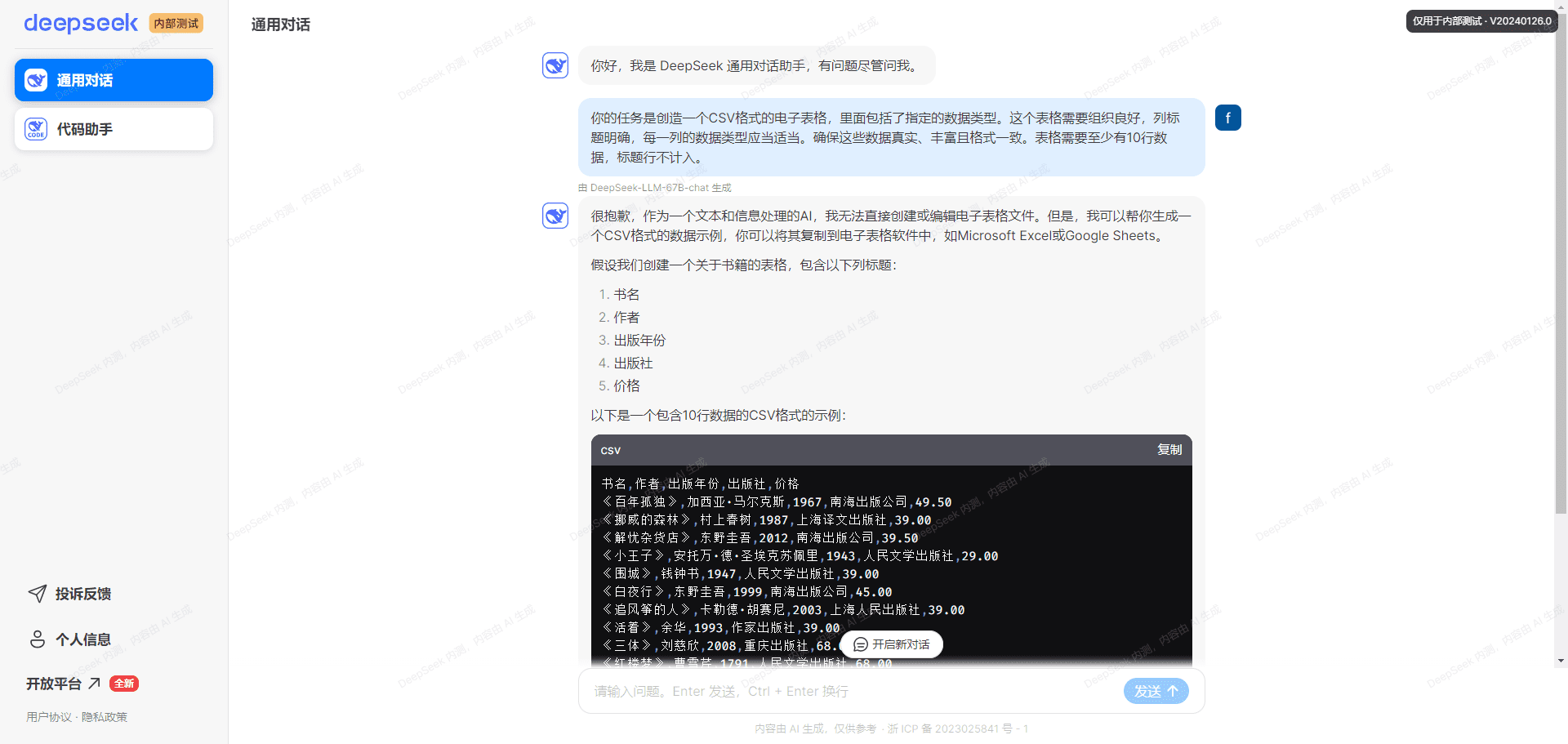

On 29 November 2023, DeepSeek released the DeepSeek-LLM series of models, with 7B and 67B parameters in both Base and Chat forms (no Instruct was released). We release the DeepSeek-Prover-V1.5 with 7B parameters, including base, SFT and RL models, to the public. For all our models, the maximum generation length is set to 32,768 tokens. Note: All models are evaluated in a configuration that limits the output length to 8K. Benchmarks containing fewer than 1,000 samples are tested multiple times using varying temperature settings to derive robust final results. Google's Gemma-2 model uses interleaved window attention to reduce computational complexity for long contexts, alternating between local sliding window attention (4K context length) and global attention (8K context length) in every other layer. Reinforcement Learning: The model uses a more sophisticated reinforcement learning approach, including Group Relative Policy Optimization (GRPO), which uses feedback from compilers and test cases, and a learned reward model to fine-tune the Coder. OpenAI CEO Sam Altman has said that it cost more than $100m to train its chatbot GPT-4, while analysts have estimated that the model used as many as 25,000 more advanced H100 GPUs. First, they fine-tuned the DeepSeekMath-Base 7B model on a small dataset of formal math problems and their Lean 4 definitions to obtain the initial version of DeepSeek-Prover, their LLM for proving theorems.
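To make the GRPO mention a little more concrete, here is a minimal sketch of the group-relative step only: several answers are sampled for the same prompt, each gets a reward, and each reward is normalized against the group's mean and standard deviation. The function names `reward_from_tests` and `group_relative_advantages`, the pass-fraction reward, and the use of the population standard deviation are all assumptions made for illustration; this is not DeepSeek's training code and it omits the policy update itself.

```python
from statistics import mean, pstdev


def reward_from_tests(passed: int, total: int) -> float:
    """Hypothetical reward: fraction of test cases a sampled program passes."""
    return passed / total if total else 0.0


def group_relative_advantages(rewards, eps=1e-8):
    """Score each sample relative to the other samples for the same prompt.

    Assumption: normalize by the group's mean and population standard
    deviation; eps avoids division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


if __name__ == "__main__":
    # Four sampled completions for one prompt, each run against 4 tests.
    rewards = [reward_from_tests(p, 4) for p in (4, 0, 2, 4)]
    for r, a in zip(rewards, group_relative_advantages(rewards)):
        print(f"reward={r:.2f} advantage={a:+.3f}")
```

Samples that beat their group's average get a positive advantage and the rest get a negative one, so no separate value network is needed to supply a baseline.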
