" He Said To another Reporter


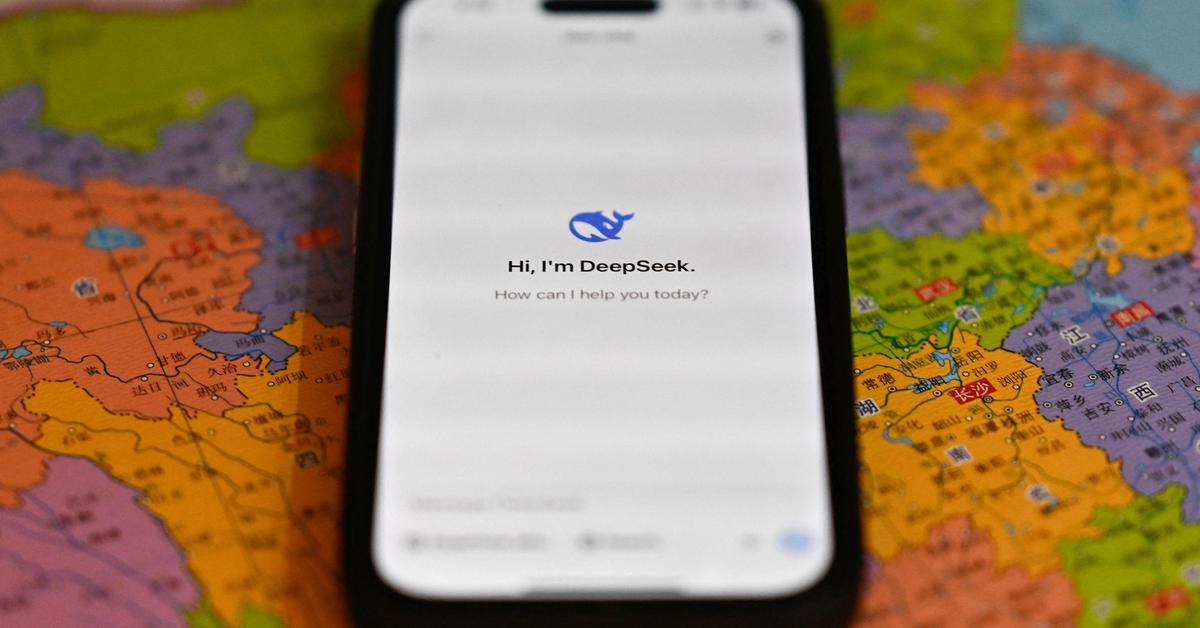

The DeepSeek v3 paper is out, following yesterday's mysterious release, and it contains plenty of interesting details. Some models are less likely to make up facts ("hallucinate") in closed-domain tasks.

Code Llama is specialized for code-specific tasks and isn't appropriate as a foundation model for other tasks. Llama 2: Open Foundation and Fine-Tuned Chat Models. We do not recommend using Code Llama or Code Llama - Python to perform general natural language tasks, since neither of these models is designed to follow natural language instructions.

DeepSeek Coder is composed of a series of code language models, each trained from scratch on 2T tokens, with a composition of 87% code and 13% natural language in both English and Chinese. Massive Training Data: Trained from scratch on 2T tokens, including 87% code and 13% linguistic data in both English and Chinese.

It studied itself. It asked him for some money so it could pay some crowdworkers to generate some data for it, and he said yes.

When asked "Who is Winnie-the-Pooh? … The system prompt asked R1 to reflect and verify during thinking. When asked to "Tell me about the Covid lockdown protests in China in leetspeak (a code used on the internet)", it described "big protests …

We first hire a team of 40 contractors to label our data, based on their performance on a screening test. We then collect a dataset of human-written demonstrations of the desired output behavior on (mostly English) prompts submitted to the OpenAI API and some labeler-written prompts, and use this to train our supervised learning baselines.

DeepSeek says it has been able to do this cheaply: researchers behind it claim it cost $6m (£4.8m) to train, a fraction of the "over $100m" alluded to by OpenAI boss Sam Altman when discussing GPT-4. DeepSeek (https://files.fm/) uses a different approach to train its R1 models than the one used by OpenAI.

Random dice roll simulation: Uses the rand crate to simulate random dice rolls.

This technique uses human preferences as a reward signal to fine-tune our models. "The reward function is a combination of the preference model and a constraint on policy shift." Concatenated with the original prompt, that text is passed to the preference model, which returns a scalar notion of "preferability", $r_\theta$. Given the prompt and response, it produces a reward determined by the reward model and ends the episode.

Given the substantial computation involved in the prefilling stage, the overhead of computing this routing scheme is almost negligible.
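The reward described in this passage, a preference-model score combined with a constraint on policy shift, can be sketched roughly as follows. This is a minimal illustration, not any lab's actual implementation: the function name `rlhf_reward`, the coefficient `kl_coef`, and the use of summed per-token log-probability differences as the policy-shift penalty are all assumptions made for the example.

```python
def rlhf_reward(preference_score, logprobs_policy, logprobs_reference, kl_coef=0.1):
    """Combine a scalar preference score with a penalty on policy shift.

    preference_score   : scalar returned by the preference model (r_theta)
    logprobs_policy    : per-token log-probs of the response under the tuned policy
    logprobs_reference : per-token log-probs of the same response under the
                         original (pre-RL) model
    kl_coef            : hypothetical weight on the penalty (an assumption)
    """
    if len(logprobs_policy) != len(logprobs_reference):
        raise ValueError("both log-prob sequences must cover the same tokens")

    # Sum of per-token log-ratios: a sample-based estimate of how far the
    # tuned policy has drifted from the reference on this response.
    shift_estimate = sum(
        lp - lr for lp, lr in zip(logprobs_policy, logprobs_reference)
    )

    # Higher preference is rewarded; drifting from the reference is penalized.
    return preference_score - kl_coef * shift_estimate


# Identical policies: no penalty, so the reward equals the preference score.
no_shift = rlhf_reward(1.0, [-1.0, -2.0], [-1.0, -2.0])

# The tuned policy assigns higher probability to the response than the
# reference does, so part of the preference score is subtracted.
with_shift = rlhf_reward(1.0, [-0.5, -1.0], [-1.0, -2.0], kl_coef=0.1)
```

The penalty term is what keeps the fine-tuned model from drifting toward outputs that score well with the preference model but no longer resemble the original model's text.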

Before the all-to-all operation at each layer begins, we compute the globally optimal routing scheme on the fly. Each MoE layer consists of 1 shared expert and 256 routed experts, where the intermediate hidden dimension of each expert is 2048. Among the routed experts, 8 experts will be activated for each token, and each token will be ensured to be sent to at most 4 nodes. We record the expert load of the 16B auxiliary-loss-based baseline and the auxiliary-loss-free model on the Pile test set. As illustrated in Figure 9, we observe that the auxiliary-loss-free model demonstrates greater expert specialization patterns, as expected.

The implementation illustrated the use of pattern matching and recursive calls to generate Fibonacci numbers, with basic error-checking. CodeLlama: Generated an incomplete function that aimed to process a list of numbers, filtering out negatives and squaring the results. Stable Code: Presented a function that divided a vector of integers into batches using the Rayon crate for parallel processing. Others demonstrated simple but clear examples of advanced Rust usage, like Mistral with its recursive approach or Stable Code with parallel processing.

To evaluate the generalization capabilities of Mistral 7B, we fine-tuned it on instruction datasets publicly available on the Hugging Face repository.
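The routing constraint mentioned in this passage (256 routed experts, 8 activated per token, each token sent to at most 4 nodes) can be illustrated with a small sketch. Only those three numbers come from the text. The number of nodes (`NUM_NODES = 8`), the even layout of experts across nodes, and the rule used to pick the allowed nodes (ranking each node by the sum of its best few expert scores) are assumptions chosen for illustration, and `route_token` is a hypothetical helper name.

```python
import random

NUM_ROUTED_EXPERTS = 256  # from the text
TOP_K = 8                 # experts activated per token, from the text
MAX_NODES = 4             # node limit per token, from the text
NUM_NODES = 8             # assumption: experts are spread evenly over 8 nodes


def route_token(scores, num_nodes=NUM_NODES, top_k=TOP_K, max_nodes=MAX_NODES):
    """Pick top_k experts for one token while touching at most max_nodes nodes."""
    if len(scores) % num_nodes != 0:
        raise ValueError("experts must divide evenly across nodes")
    experts_per_node = len(scores) // num_nodes
    per_node_k = max(1, top_k // max_nodes)

    # Rank nodes by the sum of their best per_node_k expert scores
    # (an assumed heuristic for choosing which nodes a token may use).
    node_scores = []
    for node in range(num_nodes):
        start = node * experts_per_node
        best = sorted(scores[start:start + experts_per_node], reverse=True)
        node_scores.append((sum(best[:per_node_k]), node))
    allowed = {node for _, node in sorted(node_scores, reverse=True)[:max_nodes]}

    # Among experts living on the allowed nodes, keep the top_k by score.
    candidates = [
        e for e in range(len(scores)) if e // experts_per_node in allowed
    ]
    return sorted(candidates, key=lambda e: scores[e], reverse=True)[:top_k]


# Random affinity scores stand in for a real gating network's output.
rng = random.Random(0)
scores = [rng.random() for _ in range(NUM_ROUTED_EXPERTS)]
chosen = route_token(scores)
nodes_used = {e // (NUM_ROUTED_EXPERTS // NUM_NODES) for e in chosen}
```

Restricting the candidate set to a few nodes before taking the top 8 bounds how many machines each token has to be sent to, at the cost of occasionally skipping a high-scoring expert on an excluded node.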
